See also the list of contributors who participated in this project. This project is licensed under the MIT License; see the LICENSE.md file for details.

If you'd like to contribute to the project, feel free to submit a PR. We'd love to get feedback on how you're using lambda-resize-image and on things we could add to make this tool better.

Once it is running, you can see all the requests on your command line.

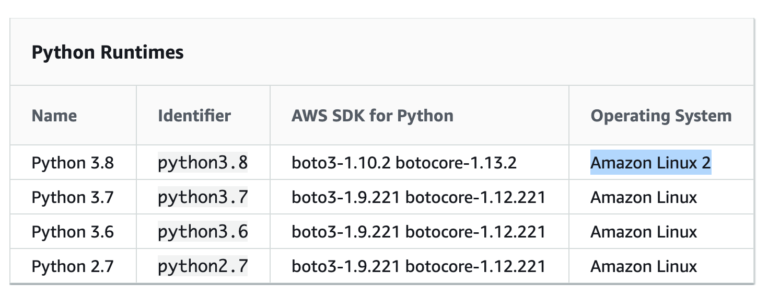

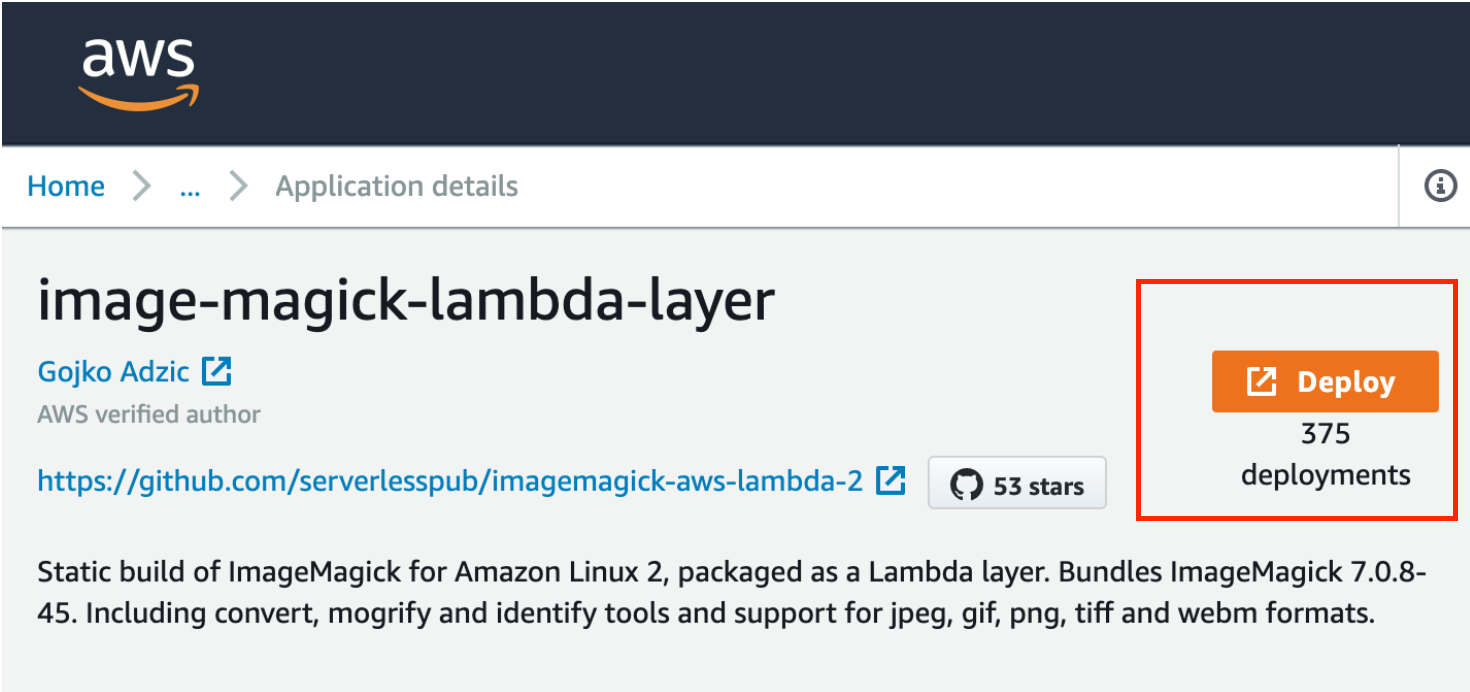

The last command (4.) will spin up a serverless-offline version of API Gateway that simulates the real one. Note that you need to be in the root of the repository.

URL: the AWS URL of the S3 bucket, or your CDN URL pointing to the bucket. You can also change the URL endpoint to a shorter, more cacheable one (CloudFront), or configure a Custom Domain Name for your API Gateway (Lambda service).

You must have the AWS CLI installed to execute this command from your console: `aws apigateway update-integration-response --rest-api-id --resource-id --http-method GET --status-code 200 --patch-operations '[`

Then we return the image as a base64-encoded binary file. We've changed our approach to remove redirects from our responses.

Node.js: AWS Lambda supports versions 10.20.1 or above (recommended: 12.x).

An AWS Lambda function to resize images automatically with API Gateway and S3 for ImageMagick tasks. When an image is requested through AWS API Gateway, this package resizes it and sends it to S3.

The trade-off was that the processing happens outside the main system, so we cannot directly track whether a failure happens in any of the steps. That issue is solvable with a cron job that checks whether each image in the root directory has been processed and, if not, processes it.

This way I not only reduced the time that this particular endpoint took, but I also centralized the logic of image uploads for the whole system, making it cleaner, faster, and more efficient (since ImageMagick is far more powerful than any built-in PHP function). Also, since the images became lighter and progressive, the time to interact with the web page decreased and the pages loaded faster.
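The base64 binary response mentioned above can be sketched as follows. This is a minimal illustration, not the package's actual code: `toBinaryResponse` is a hypothetical helper, the `image/jpeg` default is an assumption, and it assumes API Gateway is set up as a Lambda proxy integration that converts base64 bodies back to binary.

```javascript
// Hypothetical helper (not from lambda-resize-image): wraps image bytes in the
// response shape a Lambda proxy integration uses for binary payloads.
function toBinaryResponse(imageBuffer, contentType = 'image/jpeg') {
  return {
    statusCode: 200,
    headers: { 'Content-Type': contentType },
    // The body must be a string, so the bytes are base64-encoded here.
    body: imageBuffer.toString('base64'),
    // Tells API Gateway that the body is base64 and should be decoded.
    isBase64Encoded: true,
  };
}

// Usage with a stand-in buffer (the first three bytes of a JPEG file).
const response = toBinaryResponse(Buffer.from([0xff, 0xd8, 0xff]));
console.log(response.body); // prints "/9j/"
```

A real handler would pass the resized image buffer instead of the stand-in bytes.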
It was challenging to track the flow, since it was an old code base and the image-handling logic was scattered across multiple classes and services. This intense I/O and image processing in PHP took a lot of time and, on top of that, produced images that were too large and in the wrong formats.

To solve this issue, I first wanted to decouple the image processing logic from the business logic, and then change the way we handled the image processing itself. So I installed the ImageMagick library into an AWS Lambda layer and used this layer inside a Lambda function that is triggered whenever an image is uploaded to a bucket.

Note: If the uncompressed ZIP file is too large and you reach the AWS Lambda size limits, remove the binaries that you don't need from the bin/ folder. In my case, I only kept Magick-config, MagickCore-config, MagickWand-config, Wand-config, convert and gs, and removed the others.

For example, a workflow where a user uploads an image which is stored in the S3 bucket triggers Lambda function 1. This function invokes the Step Functions workflow, as shown in the image. This workflow orchestrates the job of multiple Lambda functions.

I then wrote Node.js code to interact with the ImageMagick library that:

b - removes any metadata from the image to further decrease its size

c - converts the image into a progressive JPEG

d - resizes the image into 3 different sizes while keeping the aspect ratio the same
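The ImageMagick steps described above (strip metadata, write a progressive JPEG, resize to three sizes with the aspect ratio preserved) could look roughly like this in Node.js. This is a sketch under assumptions, not the original code: the size names and pixel values, the file paths, and the helper names `buildConvertArgs`, `planResizes` and `runAll` are all hypothetical, and `convert` is assumed to be on the PATH (for example from the layer's bin/ folder).

```javascript
const { execFile } = require('child_process');
const { promisify } = require('util');

const execFileAsync = promisify(execFile);

// Hypothetical target sizes; the post does not state the actual three sizes.
const SIZES = { small: 320, medium: 640, large: 1280 };

// Build the argument list for one ImageMagick `convert` call.
function buildConvertArgs(inputPath, outputPath, maxSide) {
  return [
    inputPath,
    '-strip',                          // remove EXIF and other metadata
    '-interlace', 'Plane',             // write a progressive JPEG
    '-resize', `${maxSide}x${maxSide}`, // fit inside this box; aspect ratio is kept
    outputPath,
  ];
}

// One argument list per target size.
function planResizes(inputPath, outputDir) {
  return Object.entries(SIZES).map(([name, maxSide]) =>
    buildConvertArgs(inputPath, `${outputDir}/${name}.jpg`, maxSide));
}

// Run the conversions one after another (requires ImageMagick to be installed).
async function runAll(plans, binary = 'convert') {
  for (const args of plans) {
    await execFileAsync(binary, args);
  }
}

const plans = planResizes('/tmp/input.jpg', '/tmp/out');
console.log(plans[0].join(' '));
// prints "/tmp/input.jpg -strip -interlace Plane -resize 320x320 /tmp/out/small.jpg"
```

Calling `runAll(plans)` would execute the three conversions. Keeping the argument building separate from the execution makes the plan easy to inspect and test without ImageMagick installed.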
A project I was working on had an issue with one of its key API endpoints: it took a long time to respond. After investigating, I concluded that the cause was the way image resources were handled on the server. The image was first sent as a parameter to the endpoint and saved to disk as is. It was then read from disk to be compressed and written to disk again, then read from disk once more to be resized into three different sizes and converted into the proper format. Finally, these three images were sent to an S3 bucket.
